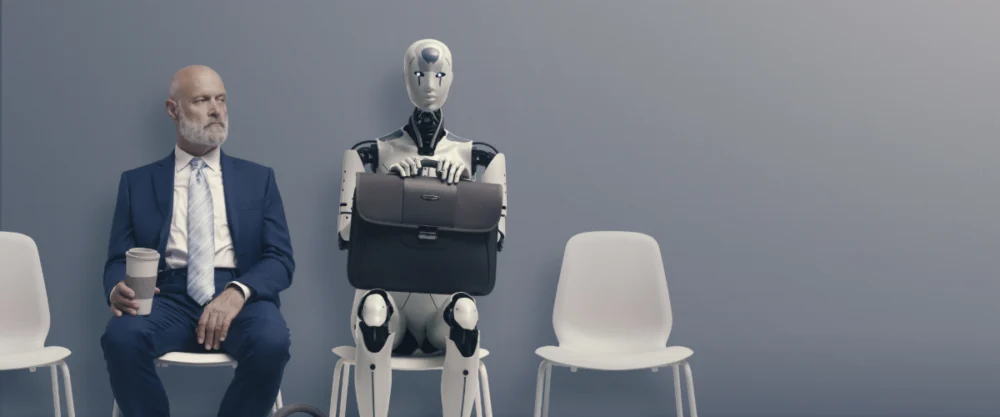

The transition from 2024 to 2025 was marked by an unprecedented level of optimism within the technology sector regarding the evolution of artificial intelligence. Prominent leaders in the field did not merely suggest incremental improvements; they predicted a fundamental shift in the nature of labor. Sam Altman, CEO of OpenAI, set the stage in late 2023 and throughout 2024 by asserting that 2025 would be the year AI agents would "join the workforce" and materially alter the economic output of global corporations. This sentiment was echoed by OpenAI’s Chief Product Officer, Kevin Weil, who characterized 2025 as the pivotal moment when AI would transition from a "super smart thing" used for consultation to a proactive entity capable of performing complex real-world tasks, such as managing administrative paperwork and navigating travel logistics.

However, as 2025 draws to a close, the data and market realities present a starkly different narrative. The "digital labor revolution" that was expected to disrupt traditional employment structures has largely failed to materialize. Instead of a workforce populated by autonomous digital employees, the industry has encountered a series of technical bottlenecks, reliability issues, and a widening gap between speculative "vibes" and demonstrable capabilities. The failure of AI agents to achieve mass integration in 2025 serves as a significant case study in the limitations of current large language model (LLM) architectures and the dangers of over-extrapolating from niche successes.

The Architecture of Expectation: Defining the AI Agent

To understand the magnitude of the 2025 shortfall, it is necessary to distinguish between the generative AI tools of 2023—primarily chatbots like ChatGPT and Claude—and the "agents" promised for 2025. A standard chatbot is reactive; it processes a prompt and generates a text-based response. In contrast, an AI agent is designed to be agentic, meaning it can break down a high-level goal into a series of sub-tasks, navigate various software interfaces, make autonomous decisions, and persist until a project is completed.
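The contrast between the two designs can be made concrete with a toy sketch. Everything in it is hypothetical: `chatbot`, `run_agent`, `toy_plan`, and `toy_execute` are illustrative stand-ins rather than any vendor's API, and a real agent would call a model and external tools where this sketch uses fixed stubs.

```python
from typing import Callable, List


def chatbot(prompt: str) -> str:
    """Reactive: one prompt in, one text response out, no follow-up actions."""
    return f"Response to: {prompt}"


def run_agent(goal: str,
              plan: Callable[[str], List[str]],
              execute: Callable[[str], bool],
              max_attempts: int = 3) -> bool:
    """Agentic: split the goal into sub-tasks and retry each one until it
    succeeds or the attempt budget runs out."""
    for task in plan(goal):
        for _ in range(max_attempts):
            if execute(task):
                break  # sub-task done, move on to the next one
        else:
            return False  # a sub-task never succeeded, so the goal fails
    return True


# Hypothetical stubs standing in for a planner and a tool-using executor.
def toy_plan(goal: str) -> List[str]:
    return [f"{goal}: step {i}" for i in range(1, 4)]


def toy_execute(task: str) -> bool:
    return True


print(chatbot("Book a flight"))
print(run_agent("Book a flight", toy_plan, toy_execute))
```

The outer loop is what separates the two: the chatbot returns after a single call, while the agent keeps acting until every sub-task is done or it gives up.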

The industry’s optimism was rooted in the success of specialized tools like Claude Code and OpenAI’s Codex. These systems demonstrated a high degree of proficiency in computer programming, a domain characterized by rigid logic, clear syntax, and immediate feedback loops. Because these agents could successfully debug code and build software components, many industry leaders, including Salesforce CEO Marc Benioff, assumed this capability would naturally generalize to the messy, unstructured environments of general office work. Benioff went so far as to predict a "trillion-dollar" revolution in digital labor, envisioning a world where agents handled customer service, sales, and operations with minimal human oversight.

A Chronology of the 2025 AI Trajectory

The timeline of AI development in 2025 reveals a trajectory of high-profile launches followed by public performance failures. In the first quarter of the year, major labs released their "agentic" iterations, with ChatGPT Agent being the most anticipated. These products were marketed as the next step in workplace productivity, capable of interacting with web browsers and legacy software systems just as a human would.

By the second quarter, however, reports from early adopters began to highlight significant friction. Unlike coding environments, where the rules are consistent, the "real world" of the internet and corporate intranets proved too volatile for LLM-based agents. In one documented instance, a high-profile agentic tool spent nearly fifteen minutes attempting to select a single value from a real-world real estate website’s drop-down menu, failing because it could not reconcile the visual layout of the site with its internal logic.

By mid-2025, the narrative began to shift from "imminent revolution" to "technical refinement." Major tech journals began reporting on the "reliability ceiling." Errors compound across steps: even if an agent completes each individual step 90% of the time, and assuming the steps fail independently, a twenty-step project succeeds only about 12% of the time (0.9^20 ≈ 0.12), so the likelihood of failure approaches 90%. In a corporate setting, a digital employee that fails nearly nine times out of ten is not an asset; it is a liability.
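The compounding arithmetic behind the "reliability ceiling" can be checked directly. The sketch below assumes every step succeeds independently with the same probability, which is a simplification (real agent errors are often correlated). The 0.9 per-step figure and the function name `chain_success` are illustrative assumptions.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every one of `steps` steps succeeds,
    assuming the steps are independent and equally reliable."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability in [0, 1]")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


# Illustrative per-step reliability of 90% across longer and longer tasks.
for steps in (1, 5, 10, 20):
    p = chain_success(0.9, steps)
    print(f"{steps:>2} steps: success {p:.1%}, failure {1 - p:.1%}")
```

Under these assumptions a twenty-step task fails roughly 88% of the time. Pushing that failure rate below 10% would require per-step reliability above 99%.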

Technical Bottlenecks and the Reliability Gap

The primary reason for the 2025 stagnation lies in the underlying technology. Most current AI agents are built on top of Large Language Models, which function through probabilistic next-token prediction. While this is effective for generating coherent text, it lacks the robust "world model" required for complex planning and error correction.

Gary Marcus, a cognitive scientist and a prominent critic of current AI trends, has noted that developers are essentially "building clumsy tools on top of clumsy tools." The layers of software designed to give LLMs agency often struggle with "hallucinations" of a different sort—not just making up facts, but making up actions. An agent might "believe" it has clicked a button when it has actually refreshed the page, leading to a loop of unproductive activity.
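One common mitigation for this failure mode is to check an observable post-condition after every action rather than trusting the agent's own report. The sketch below is a toy under stated assumptions: `FakePage`, `click`, and `verified_click` are hypothetical names, not a real browser-automation API, and the fake page deliberately mimics the case described above, where a click silently turns into a refresh.

```python
from dataclasses import dataclass


@dataclass
class FakePage:
    """Hypothetical stand-in for a browser page; not a real automation API."""
    submitted: bool = False
    reloads: int = 0
    flaky: bool = True  # when True, clicks are misrouted to a page refresh

    def click(self, button: str) -> str:
        if self.flaky:
            self.reloads += 1
        else:
            self.submitted = True
        # Either way the caller is told "clicked": this is what the agent
        # "believes" happened, regardless of the page's actual state.
        return "clicked"


def verified_click(page: FakePage, button: str, max_tries: int = 3) -> bool:
    """Click, then confirm the expected state change on the page itself.
    Gives up after max_tries instead of looping on a no-op action."""
    for _ in range(max_tries):
        page.click(button)
        if page.submitted:  # post-condition observed, not self-reported
            return True
    return False


print(verified_click(FakePage(flaky=True), "submit"))   # False
print(verified_click(FakePage(flaky=False), "submit"))  # True
```

The bounded retry matters as much as the check: without `max_tries`, the flaky case reproduces the unproductive loop the paragraph describes.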

Furthermore, Andrej Karpathy, a co-founder of OpenAI and a leading figure in AI research, has acknowledged that the industry succumbed to "overpredictions." In a recent public discussion, Karpathy suggested that rather than 2025 being the "Year of the Agent," we are likely entering the "Decade of the Agent." This admission signals a retreat from the idea that autonomous digital workers are a solved problem, suggesting instead that years of foundational research into reasoning and reliability are still required.

Dissecting the Economic and Social Reaction

The lack of a 2025 AI breakthrough has led to a cooling of the "hype cycle" that had dominated Silicon Valley for the previous 24 months. While some figures, such as Sal Khan of Khan Academy, continue to publish op-eds predicting massive worker displacement, the evidence provided for such claims remains anecdotal and limited. Khan’s assertions that AI agents could replace 80% of call center employees are often countered by the reality of implementation: companies that have attempted to replace human staff with agents have frequently faced customer backlash and technical failures.

The slow progress of other automated technologies provides a sobering parallel. Waymo, Alphabet’s self-driving car subsidiary, is often cited as a success story in AI agency. However, Waymo’s expansion remains slow and capital-intensive, requiring high-resolution mapping of every street and a massive infrastructure of remote human monitors. This "human-in-the-loop" requirement contradicts the vision of a low-cost, high-speed digital labor revolution. If a self-driving car requires years of hand-mapping a single city, a digital agent capable of navigating the infinite variety of corporate workflows will likely require a similar, if not greater, level of bespoke engineering.

Market Implications: From Speculation to Utility

As we move into 2026, the focus of the technology sector is shifting. The era of reacting to "vibes" and bold predictions from charismatic CEOs appears to be waning. Investors and corporate leaders are increasingly demanding "proof of utility" rather than "proof of concept."

The broader impact of this shift is twofold:

- Pragmatic Integration: Companies are moving away from the idea of "replacing" workers with agents and are instead focusing on "augmentation." This involves using AI to handle specific, high-volume tasks where the environment is controlled, such as data entry or basic research, rather than assigning it the role of an autonomous project manager.

- Focus on Robustness: The research community is pivoting toward solving the "brittleness" of AI. This includes developing better ways for AI to ask for help when it is confused and improving its ability to remember and learn from past mistakes within a single session.

Conclusion: The Path Toward 2026

The failure of AI to "join the workforce" in 2025 is not an indictment of the technology’s potential, but rather a correction of an unrealistic timeline. The predictions made by Altman, Weil, and Benioff underestimated the complexity of the "last mile" in AI development—the transition from a system that sounds smart to a system that acts reliably.

For 2026, the industry is expected to adopt a more measured approach. The goal is no longer to predict when AI will take over our lives, but to understand how to use the capabilities that currently exist. The "Year of the Agent" may have been a misnomer, but the "Decade of the Agent" remains a possibility. The lesson of 2025 is that in the world of high technology, there is no substitute for reliability, and "vibes" are an insufficient foundation for a global economic revolution. The focus now turns to building the rigorous, stable architectures that can actually deliver the work that was promised.