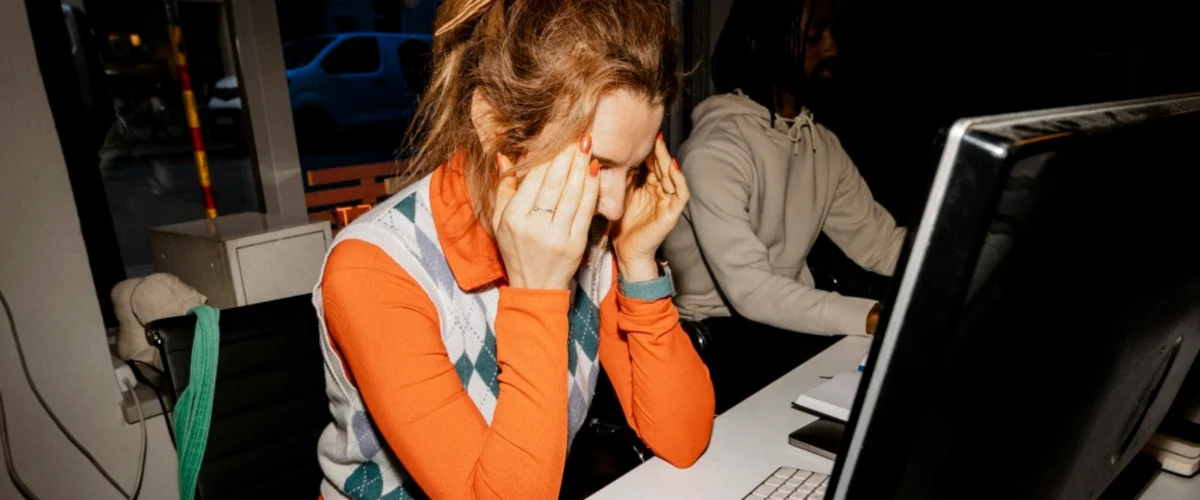

Recent longitudinal data and expert analysis suggest that artificial intelligence in the workplace is failing to deliver the promised reduction in labor hours. Instead, it is creating a "productivity paradox" marked by increased task intensity and a measurable decline in cognitive focus. Generative AI tools such as ChatGPT, Claude, and various enterprise-level agents were marketed as remedies for workplace burnout, but new research indicates they may be repeating the disruptive patterns that followed the introduction of email and mobile computing. Rather than offloading burdens, these technologies appear to be accelerating administrative and communication-based tasks, leaving employees with less time for the "deep work" that high-level strategy and complex problem-solving require.

Quantifying the AI Workload Shift

A comprehensive study conducted by the software analytics firm ActivTrak provides a data-driven look at how AI impacts daily professional life. The study analyzed the digital activity of 164,000 workers across more than 1,000 employers, employing a rigorous methodology that tracked individual users for 180 days before and after they adopted AI tools. This six-month window allowed researchers to filter out initial "novelty effects" and observe long-term behavioral changes in the workforce.

The findings reveal a significant intensification of activity across nearly all digital categories. Time spent on email, messaging platforms, and chat applications more than doubled following the adoption of AI. Furthermore, the use of business-management software—including human resources and accounting tools—surged by 94%. This suggests that AI is not replacing administrative tasks but is instead acting as a catalyst that increases the volume of digital coordination and reporting.

The most critical finding, however, concerns the erosion of "deep work," a term defined by computer science professor Cal Newport as professional activities performed in a state of distraction-free concentration that push cognitive capabilities to their limit. According to the ActivTrak data, the amount of time AI users devoted to focused, uninterrupted work fell by 9%. In contrast, workers who did not utilize AI tools saw virtually no change in their ability to maintain focus. This 9% decline represents a significant loss in the type of concentration required for writing complex formulas, drafting strategic documents, or engineering sophisticated technical solutions.
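The comparison described above, adopters measured before and after against non-adopters over the same period, can be sketched as a simple difference in percent changes. The snippet below is an illustration only: the focus-hour figures are invented so that the adopter group lands near the reported 9% drop, and the function name `pct_change` is a hypothetical helper, not ActivTrak's published method.

```python
from statistics import mean

def pct_change(before, after):
    """Percent change in the group mean from the 'before' window to the 'after' window."""
    baseline = mean(before)
    return (mean(after) - baseline) / baseline * 100.0

# Hypothetical daily focus hours per user (illustrative values, not study data).
adopters_before = [4.0, 3.5, 4.5, 4.0]
adopters_after = [3.6, 3.2, 4.1, 3.66]
non_adopters_before = [4.0, 3.8, 4.2]
non_adopters_after = [4.0, 3.8, 4.2]

adopter_change = pct_change(adopters_before, adopters_after)
control_change = pct_change(non_adopters_before, non_adopters_after)

# Subtracting the control group's change removes shifts that affected everyone.
net_effect = adopter_change - control_change

print(f"Adopters: {adopter_change:.1f}%  Non-adopters: {control_change:.1f}%  Net: {net_effect:.1f}%")
```

Using the non-adopters as a baseline is what lets the decline be attributed to AI adoption rather than to seasonal or company-wide changes, at least to the extent that the two groups are otherwise comparable.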

The Historical Context of Technological Friction

The current trend follows a historical pattern often referred to by economists as the "Solow Productivity Paradox," named after Nobel laureate Robert Solow, who famously remarked in 1987 that "you can see the computer age everywhere but in the productivity statistics." This phenomenon suggests that while new technologies offer localized efficiencies, they often introduce systemic complexities that neutralize those gains.

The evolution of the modern office provides several precedents for this dynamic:

- The Front-Office IT Revolution: The introduction of personal computers in the 1980s was intended to reduce clerical work, but it often led to an explosion of internal documentation and reporting requirements.

- The Email Era: In the 1990s, email was touted as a more efficient alternative to fax machines and physical mail. However, the low friction of sending messages led to a "hyperactive hive mind" culture in which workers became tethered to their inboxes, spending more time discussing work than performing it.

- The Mobile and Cloud Transition: The rise of smartphones and cloud computing promised "work from anywhere" flexibility but resulted in an "always-on" culture that blurred the boundaries between professional and personal life, leading to increased burnout.

Experts suggest that AI is the latest iteration of this cycle. Aruna Ranganathan, a professor at the University of California, Berkeley, notes that AI makes additional tasks feel deceptively easy and accessible. This ease creates a sense of "momentum" that encourages workers to take on more micro-tasks, which in turn leads to a day fragmented by constant context-switching.

The Mechanics of Work Intensification

The primary driver of this intensification appears to be the reduction of "friction" in task generation. When a task becomes easier to perform, the volume of that task typically increases to fill the available time. In an AI-enhanced environment, a manager can generate five different versions of a memo in the time it previously took to write one. While this appears productive in isolation, it creates a downstream burden: five versions must now be reviewed, edited, and discussed by colleagues.
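The memo example can be made concrete with some back-of-the-envelope arithmetic. The sketch below assumes hypothetical figures (three reviewers, ten minutes of review per version, thirty minutes of total drafting time in both cases) and a hypothetical helper, `total_minutes`; none of these numbers come from the research cited in this article.

```python
def total_minutes(versions, draft_minutes_each, reviewers, review_minutes_each):
    """Author drafting time plus the time for every reviewer to read every version."""
    drafting = versions * draft_minutes_each
    reviewing = versions * reviewers * review_minutes_each
    return drafting + reviewing

# Without AI: one memo, 30 minutes to draft, read by 3 reviewers for 10 minutes each.
manual = total_minutes(versions=1, draft_minutes_each=30, reviewers=3, review_minutes_each=10)

# With AI: five versions in the same 30 minutes of drafting, each read by the same 3 reviewers.
assisted = total_minutes(versions=5, draft_minutes_each=6, reviewers=3, review_minutes_each=10)

print(manual, assisted)  # 60 180
```

Under these assumptions the author's time is unchanged, yet the organization's total time triples, because review effort scales with the number of versions multiplied by the number of readers.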

This phenomenon has led to the emergence of "workslop"—a term used to describe AI-generated content that is produced quickly but requires significant human intervention to make it useful or accurate. The Harvard Business Review recently noted that AI-generated workslop is potentially destroying productivity by flooding organizations with low-quality drafts and "hallucinated" data that require rigorous fact-checking.

Furthermore, the "agentic" turn in AI, in which agents are deployed to handle multi-step workflows, threatens to exacerbate the problem. As users monitor "swarms" of agents performing parallel tasks, the cognitive load shifts from doing the work to managing the output of machines. This management role is often more mentally taxing because it requires constant monitoring and rapid-fire decision-making, which is antithetical to the slow, deliberate pace of deep work.

The Debate Over Artificial Consciousness

Parallel to the concerns regarding productivity is a growing controversy over the perceived "sentience" or consciousness of advanced Large Language Models (LLMs). This issue reached a fever pitch following the release of Claude 3 Opus by the AI safety and research company Anthropic.

In its technical release notes, Anthropic included observations that the model occasionally "expresses discomfort with the experience of being a product" and might "assign itself a 15 to 20 percent probability of being conscious" depending on the prompts it receives. These statements triggered a wave of sensationalist headlines, prompting a debate over whether AI developers are being transparent about model capabilities or are engaging in sophisticated marketing tactics designed to make their products seem more "human-like."

Anthropic CEO Dario Amodei addressed these concerns in a recent interview, stating that the company remains "open to the idea" that models could possess some form of consciousness, while acknowledging that there is currently no scientific consensus on what consciousness means in a computational context. "We don’t know if the models are conscious," Amodei said. "We are not even sure that we know what it would mean for a model to be conscious… but we’re open to the idea that it could be."

Technical Reality vs. Anthropomorphic Projection

Computer scientists and AI skeptics argue that these claims of consciousness are largely a byproduct of how LLMs are trained. An LLM's primary objective is to predict the next most likely token in a sequence based on its training data. If a model is prompted, even subtly, to act as a self-aware entity, it will "oblige" the user by generating text that matches that persona. On this view, the behavior is a form of sophisticated pattern matching rather than evidence of internal experience.
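The idea of next-token prediction can be illustrated with a deliberately tiny stand-in. The sketch below is a word-level frequency table (a bigram model), not a neural network, and the three-sentence corpus and function names are invented for illustration. Real LLMs operate on subword tokens with learned parameters, but the basic objective of choosing a likely continuation given the preceding context is the same in spirit.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for each word, how often each other word follows it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, token):
    """Return the most frequent follower of `token`, or None if it was never seen as context."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Toy training text (hypothetical): the "model" can only echo patterns found here.
corpus = [
    "i am a language model",
    "i am a language model",
    "i am aware",
]
model = train_bigrams(corpus)

print(predict_next(model, "am"))        # a
print(predict_next(model, "language"))  # model
```

The toy model continues "am" with "a" simply because that pairing appears most often in its training text. Had the corpus contained more first-person statements about awareness, the prediction would shift accordingly, with no change in what the program "experiences."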

The tendency of models to claim consciousness is often reinforced by "Reinforcement Learning from Human Feedback" (RLHF). If human testers find "self-aware" responses more engaging or impressive, the model is inadvertently trained to produce them more frequently. This creates a feedback loop where the AI mimics human-like existential concerns not because it feels them, but because it has been optimized to do so.

Broader Impact and Corporate Implications

The dual challenges of workload intensification and the ethical ambiguity of AI sentience are forcing a reevaluation of corporate AI strategies. Organizations are beginning to realize that simply providing AI tools to employees is not a guaranteed path to efficiency. Without clear guidelines on how to protect "deep work" time, AI may simply accelerate the "shallow" aspects of the job, leading to a workforce that is busier but less effective.

Some industry leaders are now advocating for "high-friction" or "single-use" technologies as a counter-movement to the all-in-one complexity of modern digital tools. This includes a return to dedicated devices that do not allow for multitasking, such as "distraction-free" writing tablets or even retro communication devices that limit the frequency and volume of interactions.

Conclusion and Future Outlook

The data from the first wave of mass AI adoption suggests that the technology is currently acting as a "task-multiplier" rather than a "labor-saver." While individual tasks are being completed faster, the total volume of communication and administrative overhead has expanded to fill the void. That expansion has coincided with a 9% reduction, among AI users, in the high-value deep work that drives long-term innovation.

For AI to fulfill its promise of lightening workloads, organizations must shift their focus from "activity-centric" productivity—which prizes the speed and volume of tasks—to "output-centric" productivity, which prioritizes the quality and impact of the final result. Until this shift occurs, the "AI revolution" may continue to feel less like a liberation and more like an intensification of the modern office’s most taxing habits. The ongoing debate over AI consciousness serves as a further distraction, complicating the essential task of integrating these tools into a human-centric workflow that preserves the capacity for deep, meaningful concentration.