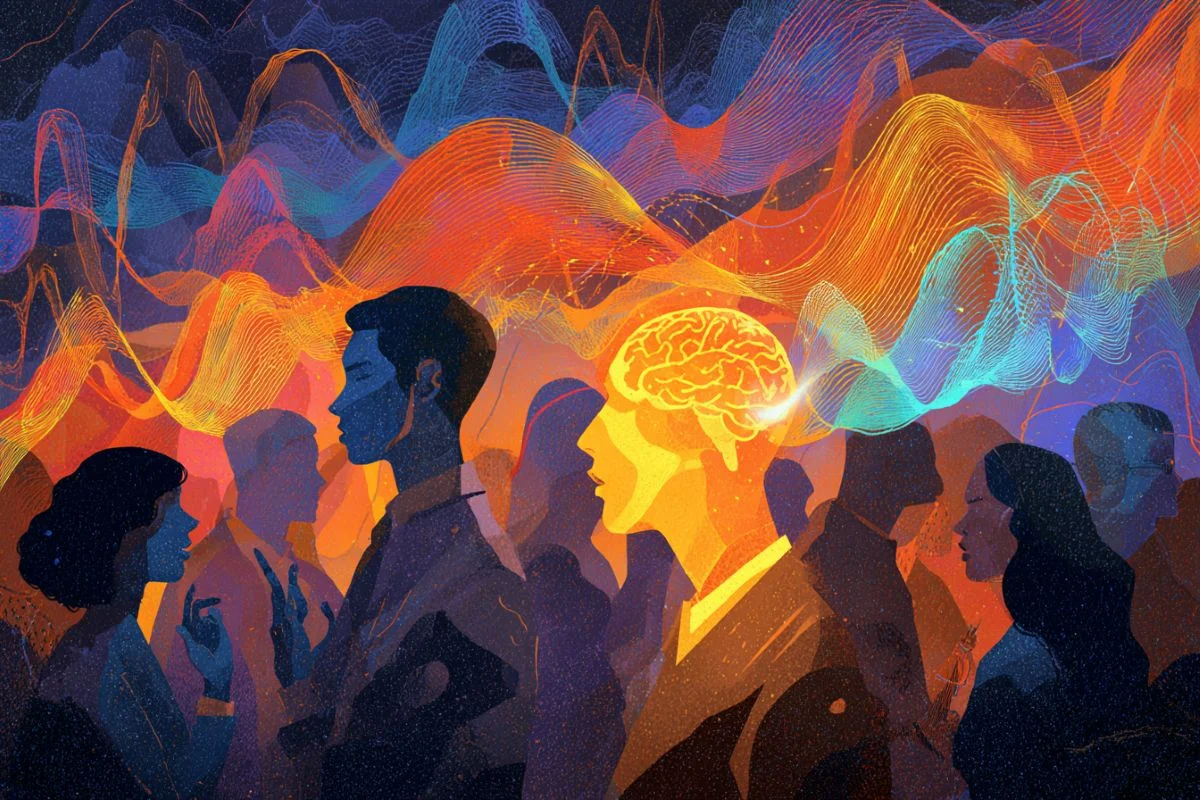

For decades, neuroscientists have been captivated by a ubiquitous yet perplexing human ability: the capacity to focus on a single conversation amid a cacophony of competing voices, famously dubbed the "cocktail party problem." This act of auditory selective attention, while seemingly effortless for most people, has long eluded a comprehensive computational explanation. Now, research from a team at the Massachusetts Institute of Technology (MIT) offers a compelling answer, showing that a simple neural mechanism, "multiplicative gain," is sufficient to account for much of this cognitive feat.

The MIT study, published in the journal Nature Human Behaviour, used a modified neural network model of the auditory system. The model showed that selectively amplifying the activity of units tuned to specific features of a target voice, such as its pitch or spatial location, can bring that voice to the forefront of attention. The model did more than identify sounds: it mimicked both the successes and the characteristic errors people make when trying to isolate a voice in a noisy environment. This agreement between computational modeling and human behavioral data supports the idea that the brain’s ability to turn up the "volume knob" on desired vocal features underlies focused listening.

The Enduring Mystery of the Cocktail Party Problem

The "cocktail party problem" was first formally identified and described in 1953 by cognitive scientist Colin Cherry. Through a series of experiments, Cherry highlighted the remarkable human capacity to attend to one auditory stream while largely filtering out others, even when those streams possessed similar physical characteristics. This seminal work laid the foundation for decades of research into selective attention, a cornerstone of cognitive neuroscience. The challenge, however, lay in understanding the precise neural mechanisms that enabled this selective processing.

Prior to Cherry’s work, the prevailing understanding of auditory processing often focused on the passive reception and basic decoding of sound waves. The "cocktail party problem" introduced the critical element of active, top-down control over sensory input. Researchers grappled with questions of how the brain distinguishes between relevant and irrelevant auditory information, when this filtering occurs in the auditory pathway (early vs. late selection theories), and what neural computations are involved in boosting the signal of a desired sound while suppressing competing noise.

Early neurophysiological studies began to shed light on potential mechanisms. Observations in both humans and animals revealed that when an individual focused on a particular auditory stimulus, neurons in the auditory cortex that were responsive to the features of that target stimulus — such as its specific frequency, timbre, or spatial origin — exhibited an amplified firing rate. This amplification, often referred to as "multiplicative gain," suggested that the brain wasn’t just passively waiting for the loudest signal, but was actively enhancing the neural representation of the desired input. However, whether this observed neural boost was sufficient to explain the complex behavioral phenomenon of selective listening remained an open question. Computational models of perception, while adept at identifying clear, unambiguous sounds, consistently struggled to replicate the human ability to attend to a specific sound within a complex, multi-source acoustic environment. This gap between neurophysiological observation and computational replication represented a significant hurdle in fully understanding selective attention.

A Computational Breakthrough: The Multiplicative Gain Hypothesis

The MIT team, led by senior author Josh McDermott, a professor of brain and cognitive sciences, and lead author Ian Griffith, a graduate student, set out to bridge this gap. Their core hypothesis was that the previously observed multiplicative gain in neural activity was not merely a correlative phenomenon but a causal mechanism sufficient to explain human selective attention. To test this, they embarked on designing a computational model capable of simulating this neural amplification.

Their approach began with an established neural network architecture commonly used to model auditory processing. The crucial modification involved integrating the concept of multiplicative gains at each processing stage of the network. This meant that the activation of individual processing units within the model could be dynamically scaled up or down based on the specific auditory features they represented. For example, units tuned to a low pitch could be boosted while those tuned to a high pitch could be attenuated, or vice versa, depending on the target.

The training regimen for this modified network was ingeniously designed to mimic human attentional cues. In each trial, the model was first presented with a "cue" – a short audio clip of the specific voice it needed to attend to. The neural activations generated by this cue then served to determine the multiplicative gains that would be applied to subsequent auditory inputs. "Imagine the cue is an excerpt of a voice that has a low pitch," explains Griffith. "Then, the units in the model that represent low pitch would get multiplied by a large gain, whereas the units that represent high pitch would get attenuated."
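To make the cue-then-gain idea concrete, here is a minimal sketch in Python with NumPy. It is not the published model: the two-stage network, its random weights, the `gains_from_cue` rule, and the `max_gain` parameter are all assumptions chosen for illustration. It shows only the general pattern Griffith describes, in which activations evoked by the cue set a gain for every unit at every stage, and those gains then scale the response to a later input.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical two-stage network with fixed random weights (not the published model).
weights = [rng.normal(size=(6, 4)), rng.normal(size=(5, 6))]

def relu(x):
    return np.maximum(x, 0.0)

def forward(x, gains=None):
    """Run x through each stage; if gains are given, scale each stage's units."""
    activations = []
    h = np.asarray(x, dtype=float)
    for i, w in enumerate(weights):
        h = relu(w @ h)
        if gains is not None:
            h = h * gains[i]  # multiplicative gain: one factor per unit
        activations.append(h)
    return activations

def gains_from_cue(cue, max_gain=3.0):
    """Derive per-unit gains at every stage from the cue's activations.

    Assumed rule: the unit driven most strongly by the cue gets max_gain,
    a silent unit gets 1 / max_gain, and the rest fall in between.
    """
    gains = []
    for act in forward(cue):
        scaled = act / (act.max() + 1e-12)  # 0..1 within the stage
        gains.append(max_gain ** (2.0 * scaled - 1.0))
    return gains

cue = np.array([1.0, 0.2, 0.0, 0.1])      # stand-in for features of the cue excerpt
mixture = np.array([0.8, 0.9, 0.7, 0.6])  # stand-in for a multi-talker mixture

g = gains_from_cue(cue)
attended = forward(mixture, gains=g)
print([np.round(stage_gains, 2) for stage_gains in g])
```

In this sketch, units that respond strongly to the cue are boosted and units that respond weakly are attenuated, which is the "low pitch gets a large gain" intuition from the quote. In the study the gains were optimized as part of training rather than fixed by a hand-written rule like this one.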

Following the cue, the model was presented with a "cocktail party" scenario: a mixed audio clip containing multiple voices, including the target voice, and tasked with identifying a specific word spoken by the target. The model’s activations in response to this complex mixture were then processed through the previously set multiplicative gains. The critical question was whether this selective amplification would be sufficient to enable the model to isolate and identify the target voice, replicating human-like attentional behavior.
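A toy version of a single trial can illustrate why selective amplification might be enough to pick out the target's word. Everything in the sketch below is hypothetical: the four pitch-tuned units, the three-word vocabulary, the Gaussian tuning, and the gain rule are stand-ins, and a real model operates on audio rather than on hand-built feature maps.

```python
import numpy as np

PITCH_UNITS_HZ = np.array([100.0, 140.0, 180.0, 220.0])  # hypothetical tuning
WORDS = ["apple", "river", "candle"]                      # hypothetical vocabulary

def pitch_response(pitch_hz, bandwidth_hz=30.0):
    """Gaussian tuning of each pitch unit to a voice's pitch."""
    return np.exp(-0.5 * ((PITCH_UNITS_HZ - pitch_hz) / bandwidth_hz) ** 2)

def encode(pitch_hz, word, level=1.0):
    """Toy 'voice': a (pitch unit x word) activity map for one talker."""
    word_vec = np.eye(len(WORDS))[WORDS.index(word)]
    return level * np.outer(pitch_response(pitch_hz), word_vec)

def identify(mixture, gains=None):
    """Apply per-unit gains, pool over pitch units, report the strongest word."""
    if gains is None:
        gains = np.ones(len(PITCH_UNITS_HZ))
    scores = (gains[:, None] * mixture).sum(axis=0)
    return WORDS[int(np.argmax(scores))]

# Cue: an excerpt of the low-pitched target voice sets the gains.
cue = pitch_response(110.0)
gains = 4.0 ** (2.0 * cue - 1.0)  # assumed rule: gains span 1/4 to 4

# Mixture: the target plus a louder, higher-pitched distractor.
mixture = encode(110.0, "river") + encode(210.0, "candle", level=1.5)

print(identify(mixture))          # without gains: "candle" (the louder talker)
print(identify(mixture, gains))   # with cue-driven gains: "river" (the target)
```

Without gains, the readout simply follows the louder talker. With gains set by the cue, the units carrying the target's pitch dominate the pooled response, so the target's word wins even though the distractor is louder.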

Mimicking Human Perception and Flaws

The results were remarkably consistent with human performance. Under a wide array of conditions, the MIT model not only successfully performed the selective listening task but also exhibited patterns of errors strikingly similar to those made by human listeners. For instance, just like humans, the model struggled more when trying to differentiate between two voices with similar characteristics, such as two male voices or two female voices, which often share overlapping pitch ranges. This fidelity to human error, rather than just success, provided powerful validation for the multiplicative gain hypothesis. "We did experiments measuring how well people can select voices across a pretty wide range of conditions, and the model reproduces the pattern of behavior pretty well," Griffith noted, underscoring the model’s accuracy in capturing the nuances of human auditory attention.

This finding suggests that the "volume knob" mechanism isn’t perfect, and its limitations directly mirror our own. When the target and distractor voices share too many similar features—be it pitch, timbre, or rhythm—the brain’s selective amplification struggles to isolate the desired signal effectively, leading to attentional failures. This insight moves beyond simply explaining how we can hear selectively, to also explaining why we sometimes fail to do so.
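The same reasoning can be sketched numerically. The example below assumes a bank of hypothetical pitch-tuned units with Gaussian tuning and an illustrative gain rule; it measures what share of the gained activity comes from the target as the distractor's pitch moves closer to or further from it. The numbers are not taken from the study and only illustrate the qualitative effect.

```python
import numpy as np

preferred_hz = np.linspace(80.0, 280.0, 21)  # hypothetical pitch-tuned units

def response(pitch_hz, bandwidth_hz=20.0):
    """Gaussian tuning curve of every unit to a voice at the given pitch."""
    return np.exp(-0.5 * ((preferred_hz - pitch_hz) / bandwidth_hz) ** 2)

def target_share(target_hz, distractor_hz, max_gain=4.0):
    """Share of gained activity that comes from the target (0.5 = no selection)."""
    gains = max_gain ** (2.0 * response(target_hz) - 1.0)  # cue is the target voice
    target = (gains * response(target_hz)).sum()
    distractor = (gains * response(distractor_hz)).sum()
    return target / (target + distractor)

# Target at 120 Hz; distractors at increasing pitch separation.
for distractor_hz in (125.0, 150.0, 220.0):
    share = target_share(120.0, distractor_hz)
    print(f"distractor at {distractor_hz:.0f} Hz -> target share {share:.2f}")
```

When the distractor sits only a few hertz from the target, the gains boost both voices almost equally and the target's share stays near one half. As the pitches move apart, the same gains favor the target more and more, which parallels the difficulty listeners have with two similar voices.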

Unveiling New Auditory Insights: The Spatial Dimension

Beyond voice characteristics, spatial location is a well-established critical cue for human auditory attention. Our ability to localize sounds in space, primarily through differences in arrival time and intensity at each ear (interaural time differences, ITDs; and interaural level differences, ILDs), significantly aids in segregating sound sources. The MIT team’s model successfully learned to leverage spatial location for attentional selection, demonstrating improved performance when the target voice was positioned at a different location from the distracting voices.
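For readers unfamiliar with these two cues, the following sketch estimates them from a synthetic two-ear signal. It is a textbook-style illustration and not part of the study: the function name `estimate_itd_ild`, the white-noise source, the 48 kHz sample rate, and the 24-sample delay are all assumptions. The ITD is taken as the lag that maximizes the cross-correlation between the ears, and the ILD as the ratio of their RMS levels in decibels.

```python
import numpy as np

def estimate_itd_ild(left, right, sample_rate_hz, max_lag=40):
    """Return (ITD in seconds, ILD in dB).

    Positive ITD means the sound reached the left ear first;
    positive ILD means it was louder in the left ear.
    """
    lags = np.arange(-max_lag, max_lag + 1)
    core = left[max_lag:-max_lag]
    n = len(core)
    # Slide the right-ear signal against the central part of the left-ear signal.
    xcorr = [np.dot(core, right[max_lag + lag : max_lag + lag + n]) for lag in lags]
    best_lag = int(lags[int(np.argmax(xcorr))])
    itd_s = best_lag / sample_rate_hz
    rms_left = np.sqrt(np.mean(left ** 2))
    rms_right = np.sqrt(np.mean(right ** 2))
    ild_db = 20.0 * np.log10(rms_left / rms_right)
    return itd_s, ild_db

# Synthetic source on the listener's left: the right ear hears it later and quieter.
sample_rate_hz = 48_000
rng = np.random.default_rng(1)
source = rng.standard_normal(4800)
delay = 24  # samples, i.e. 0.5 ms at 48 kHz
left = source
right = 0.5 * np.concatenate([np.zeros(delay), source[:-delay]])

itd_s, ild_db = estimate_itd_ild(left, right, sample_rate_hz)
print(f"ITD = {itd_s * 1e3:.2f} ms, ILD = {ild_db:.1f} dB")
```

With these settings the estimate should recover an ITD of 0.5 ms and an ILD of about 6 dB, since halving the amplitude corresponds to roughly a 6 dB drop. Real binaural recordings are far messier, with reverberation and frequency-dependent head filtering, which is one reason the researchers trained their model on binaural audio rather than on hand-computed cues.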

A particularly exciting aspect of this research was the model’s utility as an "engine for discovery." The researchers exploited the computational efficiency of their model to test an exhaustive range of spatial configurations – a feat that would be prohibitively time-consuming and resource-intensive with human subjects. By systematically varying target and distractor locations across both horizontal and vertical planes, the model revealed a novel and striking pattern: it was significantly more effective at selecting the target voice when the sounds were separated in the horizontal plane (left-right) compared to the vertical plane (up-down).

Intrigued by this computational prediction, the MIT team designed a follow-up experiment with human participants to test it. The human behavioral data matched the model’s prediction: people found it much harder to attend to a voice when the spatial separation was vertical rather than horizontal. This points to an asymmetry in human spatial auditory processing. One speculative explanation is evolutionary: tracking sounds horizontally (e.g., predators, prey, fellow humans on the ground) may have mattered more than vertical sound localization. As McDermott articulated, "That was just one example where we were able to use the model as an engine for discovery, which I think is an exciting application for this kind of model." The episode shows how well-designed computational models can not only explain known phenomena but also generate testable hypotheses that lead to new findings.

From Lab to Life: Real-World Implications

The implications of this research extend far beyond theoretical neuroscience, promising tangible advancements in several real-world applications. One of the most immediate and impactful areas is the development of improved assistive listening devices, particularly cochlear implants. Current cochlear implants, while revolutionary for restoring hearing, often struggle in noisy, multi-speaker environments. Users frequently report difficulty distinguishing individual voices amidst background chatter, a direct manifestation of the "cocktail party problem."

The MIT model provides a computational blueprint for how the brain achieves selective listening. By incorporating the principles of multiplicative gain into the signal processing strategies of cochlear implants, it may be possible to design devices that don’t just amplify all incoming sounds, but intelligently identify and boost the features of a desired speaker while attenuating distractors. This could dramatically enhance the quality of life for individuals relying on these devices, allowing them to participate more fully in social interactions and navigate complex auditory landscapes with greater ease. The research team is actively pursuing this application, hoping their findings can lead to significant improvements in implant technology.

Beyond medical devices, the insights gained from this study hold immense potential for advancements in artificial intelligence and machine learning, particularly in the realm of speech recognition. While modern AI assistants can accurately process spoken commands in quiet environments, their performance often degrades significantly in noisy, multi-speaker settings. The multiplicative gain mechanism offers a powerful paradigm for developing more robust and human-like AI speech processing systems. Imagine virtual assistants that can selectively attend to your voice in a crowded room, or intelligent meeting transcription services that can accurately segregate and transcribe individual speakers even when they overlap. This research could pave the way for a new generation of AI that is not just reactive but intelligently selective in its auditory perception.

Furthermore, this work contributes to a deeper understanding of human cognition itself. The model exhibited signatures of "late selection" like those seen in human auditory cortex, in which substantial processing of all auditory inputs occurs before selective amplification favors the most relevant information. This contrasts with "early selection" theories, which hold that filtering occurs much earlier in the sensory pathway. The model’s ability to reproduce the broad "phenotype" of human auditory attention, from its successes to its characteristic failures and spatial quirks, offers a unifying framework for understanding how our brains navigate the complex acoustic world.

A New Era in Auditory Neuroscience

The MIT study represents a significant leap forward in auditory neuroscience. By providing a compelling computational explanation for the "cocktail party problem," it transforms a longstanding neuroscientific mystery into a quantifiable, testable mechanism. The elegance of the "multiplicative gain" hypothesis lies in its simplicity and its remarkable explanatory power, accounting for a broad spectrum of human attentional behaviors.

The research not only validates decades of neurophysiological observations but also offers a powerful new tool for scientific discovery. The model’s ability to predict novel human attentional effects, subsequently confirmed through human experiments, underscores its potential as a simulation platform for exploring the intricate workings of the brain. As computational power continues to advance, such models will become increasingly vital for understanding complex neural processes that are difficult to probe directly in biological systems.

Funded by the National Institutes of Health, this work by Ian M. Griffith, R. Preston Hess, and Josh H. McDermott, published in Nature Human Behaviour, marks a pivotal moment. It opens new avenues for research into the neural underpinnings of attention, perception, and consciousness, while laying the groundwork for technological solutions that could profoundly impact human lives. The brain’s "volume knob" is no longer just a metaphor; it is now a computationally defined mechanism, offering a clearer picture of how we tune into the world around us.

About this auditory neuroscience research news

Author: Sarah McDonnell

Source: MIT

Contact: Sarah McDonnell – MIT

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Optimized feature gains explain and predict successes and failures of human selective listening” by Ian M. Griffith, R. Preston Hess & Josh H. McDermott. Nature Human Behaviour

DOI: 10.1038/s41562-026-02414-7

Abstract

Optimized feature gains explain and predict successes and failures of human selective listening

Attention facilitates communication by enabling selective listening to sound sources of interest. However, little is known about why attentional selection succeeds in some conditions but fails in others. While neurophysiology implicates multiplicative feature gains in selective attention, it is unclear whether such gains can explain real-world attention-driven behaviour. Here we optimized an artificial neural network with stimulus-computable feature gains to recognize a cued talker’s speech from binaural audio in ‘cocktail party’ scenarios. Though not trained to mimic humans, the model produced human-like performance across diverse real-world conditions, exhibiting selection based both on voice qualities and on spatial location as well as selection failures in conditions where humans tended to fail. It also predicted novel attentional effects that we confirmed in human experiments, and exhibited signatures of ‘late selection’ like those seen in human auditory cortex. The results suggest that human-like attentional strategies naturally arise from the optimization of feature gains for selective listening.