The deployment of robotic swarms for complex, time-sensitive tasks, such as environmental cleanup, advanced manufacturing, or intricate logistical operations, holds immense promise for the future. However, a persistent challenge has plagued the optimization of these collective systems: the "too many cooks" problem. Initially, increasing the number of robots assigned to a task enhances speed and efficiency, adhering to the principle that many hands make light work. Yet, a critical tipping point is often reached where additional robots cease to be an asset and instead become a hindrance, crowding the operational space, creating bottlenecks, and ultimately grinding the entire operation to a standstill. This phenomenon, where collective efficiency declines due to excessive density and interaction, represents a significant barrier to scalable autonomous systems.

Harvard applied mathematicians from the lab of L. Mahadevan, the Lola England de Valpine Professor of Applied Mathematics, Organismic and Evolutionary Biology, and Physics, have unveiled an elegant and counterintuitive solution to this pervasive problem. Their study, published in the Proceedings of the National Academy of Sciences, shows that introducing a calibrated amount of "noise," or randomness, into the individual movements of robots can avert permanent traffic jams, allowing the swarm to self-organize and achieve maximum efficiency. The research was led by applied mathematics Ph.D. student Lucy Liu, who is co-advised by SEAS Senior Research Fellow Justin Werfel, and it makes a compelling case for decentralized, stochastic control in complex multi-agent systems.

The Dynamics of Congestion: Why More Can Mean Less

The concept of congestion is not new to robotics or human systems. From urban traffic snarls to overcrowded pedestrian walkways, the principle is the same: when the density of agents exceeds a certain threshold within a fixed area, their individual paths inevitably intersect and impede one another. In robotic swarms, this issue is exacerbated by the often-deterministic nature of their programming. Robots are typically designed to follow the most direct, efficient path to their goal. While seemingly logical for an individual unit, this collective adherence to straight-line trajectories in a crowded environment leads to predictable and persistent blockages. Imagine a fleet of cleaning robots in a warehouse, all programmed to move from point A to point B via the shortest route. As their numbers grow, they become immobile "brick walls" to each other, forming static queues and preventing any progress.

Traditional approaches to mitigating this rely on sophisticated central control systems that attempt to plot collision-free paths for every single robot. However, the space of joint configurations such a planner must search grows exponentially with the number of robots. Calculating and updating optimal paths for hundreds or thousands of interacting agents in real time is computationally prohibitive, especially in dynamic environments where conditions change rapidly. This inherent complexity highlights the need for a simpler, more robust solution, one that does not rely on a supercomputer orchestrating every micro-movement.
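A back-of-the-envelope count shows where the exponential growth comes from. Assume, purely for illustration, a grid of C cells with at most one robot per cell. A planner that reasons about all N robots jointly faces C!/(C - N)! possible arrangements, which is close to C^N when N is much smaller than C. The helper `joint_configurations` is hypothetical and measures only the size of the joint state space, not the running time of any particular planner.

```python
from math import perm


def joint_configurations(num_cells: int, num_robots: int) -> int:
    """Count the ways to place distinguishable robots on distinct grid cells.

    Each robot occupies its own cell, so this is an ordered selection:
    num_cells * (num_cells - 1) * ... * (num_cells - num_robots + 1).
    """
    return perm(num_cells, num_robots)


if __name__ == "__main__":
    cells = 100  # a hypothetical 10 x 10 warehouse floor
    for robots in (1, 2, 5, 10, 20):
        print(f"{robots:>2} robots: {joint_configurations(cells, robots):.3e} joint states")
```

Every added robot multiplies the count by roughly the number of free cells, which is why exhaustive joint planning stops scaling long before a swarm reaches hundreds of members.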

The "Noise" Solution: Embracing Randomness for Fluidity

The Harvard team’s research offers precisely such a solution: strategic randomness. The core idea is to give each robot a specific, tunable amount of "wiggle" in its path. Instead of moving rigidly in straight lines, robots are allowed to deviate slightly, to "zigzag" or pivot, even if their immediate path is then not the most direct. This might seem counterproductive at first glance – why introduce inefficiency to improve efficiency? Liu explains that this counterintuitive approach simplifies the problem significantly. "When you have a lot of randomness," she noted, "it becomes possible to take averages – average distances, average times, average behaviors. This makes it a lot easier to make predictions." Treating each individual as a simple agent with a tunable amount of "noise" makes the mathematical analysis of crowd density, which is notoriously complex because of the myriad possible paths and interactions, far more manageable.
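The "wiggle" can be made concrete with a minimal sketch. One common way to model it, assumed here rather than taken from the paper, is to perturb each agent's heading with Gaussian noise whose standard deviation is the tunable noise level. The function `noisy_step` and its parameters (`speed`, `dt`, `noise`) are hypothetical.

```python
import math
import random


def noisy_step(x, y, goal_x, goal_y, noise, speed=1.0, dt=0.1, rng=random):
    """Move one agent a single time step toward its goal with a random 'wiggle'.

    The ideal heading points straight at the goal; a Gaussian perturbation with
    standard deviation `noise` (radians) is added to it. noise = 0 gives a
    straight-line beeline; larger values give increasingly zigzagging paths.
    """
    ideal = math.atan2(goal_y - y, goal_x - x)
    heading = ideal + rng.gauss(0.0, noise)
    step = speed * dt  # distance per step is fixed; only the direction varies
    return x + step * math.cos(heading), y + step * math.sin(heading)


if __name__ == "__main__":
    rng = random.Random(42)
    for noise in (0.0, 0.5, 1.5):
        x, y = 0.0, 0.0
        for _ in range(50):
            x, y = noisy_step(x, y, 10.0, 0.0, noise, rng=rng)
        print(f"noise={noise}: ended at ({x:.2f}, {y:.2f})")
```

Keeping the step length fixed and randomizing only the direction isolates the trade-off the article describes: noise costs forward progress on an open floor but gives a blocked agent a way to slide sideways.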

This "wiggle" acts as a form of dynamic adaptability. In a crowded space, a robot moving in a perfectly straight line becomes an immovable obstacle to others. A robot with a slight amount of random movement, however, can subtly shift, pivot, and slide around existing obstacles. This transforms what would otherwise be a permanent traffic jam into a fluid, albeit momentarily chaotic, moving crowd. The key is finding the "Goldilocks zone" – an optimal level of noise that is neither too little (leading to gridlock) nor too much (leading to aimless wandering and overall inefficiency). In this sweet spot, robots might bump into each other and form short-lived, transient jams, but the inherent randomness allows them to quickly dislodge and slip past, maintaining the overall flow of the swarm.

A Multi-Pronged Approach: Simulations, Theory, and Physical Validation

The scientific rigor of the study stemmed from its comprehensive methodology, combining mathematical theory, extensive computer simulations, and real-world robotic experiments.

1. Mathematical Framework: The theoretical foundation sought to model the collective behavior of agents with varying degrees of stochasticity. By analyzing the interplay of agent density and movement randomness, the researchers aimed to predict optimal conditions for goal attainment. The complexity of modeling individual interactions was bypassed by leveraging the power of statistical averages, a technique made possible by the introduction of sufficient randomness.
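A generic piece of steady-state bookkeeping shows why averages are so useful here. It is offered as an illustrative assumption, not as the approximation derived in the paper. Suppose N agents share an arena of area A (density ρ = N/A), η is the noise level, each agent takes an average time ⟨τ⟩ to reach a goal before receiving the next one, and ⟨ℓ⟩ is the mean straight-line distance to a newly assigned goal. The goal attainment rate R then follows from these averages alone, with the effective speed v_eff defined by the second relation.

```latex
R(\rho,\eta) = \frac{N}{\langle \tau(\rho,\eta) \rangle}
             = \frac{\rho A \, v_{\mathrm{eff}}(\rho,\eta)}{\langle \ell \rangle},
\qquad
v_{\mathrm{eff}}(\rho,\eta) \equiv \frac{\langle \ell \rangle}{\langle \tau(\rho,\eta) \rangle}
```

In this form the trade-off is visible: with too little noise, jams drive v_eff toward zero as ρ grows, while with too much noise, wandering lowers v_eff even in a nearly empty arena.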

2. Computer Simulations: To test their theoretical hypotheses, the team developed computer simulations of robotic fleets. In these simulations, each "agent" started at a random position and was assigned a randomly chosen goal location. Crucially, once a robot reached its destination, it was immediately assigned a new one, mimicking continuous operational scenarios like those found in logistics or environmental monitoring. The simulations allowed precise control over the "noise" parameter. With zero noise, robots moved in perfectly straight lines; as noise increased, their paths became more erratic, ranging from slight deviations to pronounced zigzagging.
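The simulation loop described above can be sketched in a few dozen lines. This is a minimal sketch under stated assumptions, not the authors' code: agents live on a hypothetical square lattice with at most one agent per cell, the greedy "beeline" is a step along the axis with the larger remaining gap, and `noise` is the probability of taking a uniformly random step instead. The function name `simulate` and all parameters are illustrative. It returns the goal attainment rate, measured as goals reached per time step.

```python
import random

MOVES = [(1, 0), (-1, 0), (0, 1), (0, -1)]


def simulate(num_agents, size, noise, steps, seed=0):
    """Return goals reached per time step for agents on a size x size grid.

    Hypothetical lattice model: one agent per cell; each step every agent, in
    random order, tries one move. With probability `noise` the move is in a
    random direction, otherwise it is the greedy move toward its goal. Moves
    into occupied or out-of-bounds cells are skipped (the agent waits).
    """
    rng = random.Random(seed)
    cells = [(x, y) for x in range(size) for y in range(size)]
    positions = rng.sample(cells, num_agents)  # distinct random start cells
    occupied = set(positions)

    def new_goal(pos):
        # Draw a random goal cell different from the agent's current cell.
        while True:
            goal = rng.choice(cells)
            if goal != pos:
                return goal

    goals = [new_goal(p) for p in positions]
    reached = 0
    order = list(range(num_agents))
    for _ in range(steps):
        rng.shuffle(order)
        for i in order:
            x, y = positions[i]
            gx, gy = goals[i]
            if rng.random() < noise:
                dx, dy = rng.choice(MOVES)  # the random "wiggle"
            elif abs(gx - x) >= abs(gy - y):
                dx, dy = (1 if gx > x else -1), 0  # greedy step in x
            else:
                dx, dy = 0, (1 if gy > y else -1)  # greedy step in y
            target = (x + dx, y + dy)
            if not (0 <= target[0] < size and 0 <= target[1] < size):
                continue
            if target in occupied:
                continue
            occupied.remove((x, y))
            occupied.add(target)
            positions[i] = target
            if target == goals[i]:
                reached += 1
                goals[i] = new_goal(target)  # continuous reassignment
    return reached / steps


if __name__ == "__main__":
    for noise in (0.0, 0.1, 0.3, 0.6, 0.9):
        print(noise, simulate(num_agents=60, size=15, noise=noise, steps=500))
```

Sweeping `noise` at a fixed, fairly high density is one way to look for the qualitative pattern the article reports. In this toy model a zero-noise run can lock up permanently: two agents facing each other head-on in a row each wait forever for the other to move, because neither greedy step ever becomes available.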

The simulations yielded critical observations:

- Zero Noise: Agents beelining towards goals invariably led to severe, persistent traffic jams, with the entire system locking up.

- High Noise: While traffic jams ceased, the excessive wandering made the robots highly inefficient, significantly extending the time to reach goals.

- Optimal Noise ("Goldilocks Zone"): A specific range of noise levels allowed for dynamic, short-lived jams that quickly resolved, ensuring continuous flow and maximizing the "goal attainment rate" – the number of goal destinations reached per unit of time.

These observations were then used to build mathematical formulas that could approximate the goal attainment rate, enabling the computation of optimal crowd density and noise levels to maximize overall output.
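The last step, choosing the density and noise level that maximize the attainment rate, can be sketched as a brute-force grid search. This is a swapped-in technique for illustration: the study derives its own analytic approximation, which is not reproduced here, and `toy_rate` below is an invented stand-in chosen only so the search has an interior optimum to find. The helper `best_parameters` is hypothetical and accepts any function of (density, noise).

```python
from itertools import product


def best_parameters(rate, densities, noises):
    """Return the (density, noise) pair on the grid that maximizes rate()."""
    return max(product(densities, noises), key=lambda pair: rate(*pair))


def toy_rate(density, noise):
    # Invented stand-in, NOT the paper's formula: throughput grows with density
    # until crowding sets in; noise postpones gridlock but slows progress.
    jam_density = 0.2 + 0.8 * noise
    return density * (1.0 - noise) * max(0.0, 1.0 - density / jam_density)


if __name__ == "__main__":
    densities = [i / 20 for i in range(1, 20)]
    noises = [i / 20 for i in range(0, 20)]
    print(best_parameters(toy_rate, densities, noises))
```

A grid search is crude but transparent; with a closed-form rate one would instead set the partial derivatives to zero, and with a simulated rate each grid point would be an averaged simulation run.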

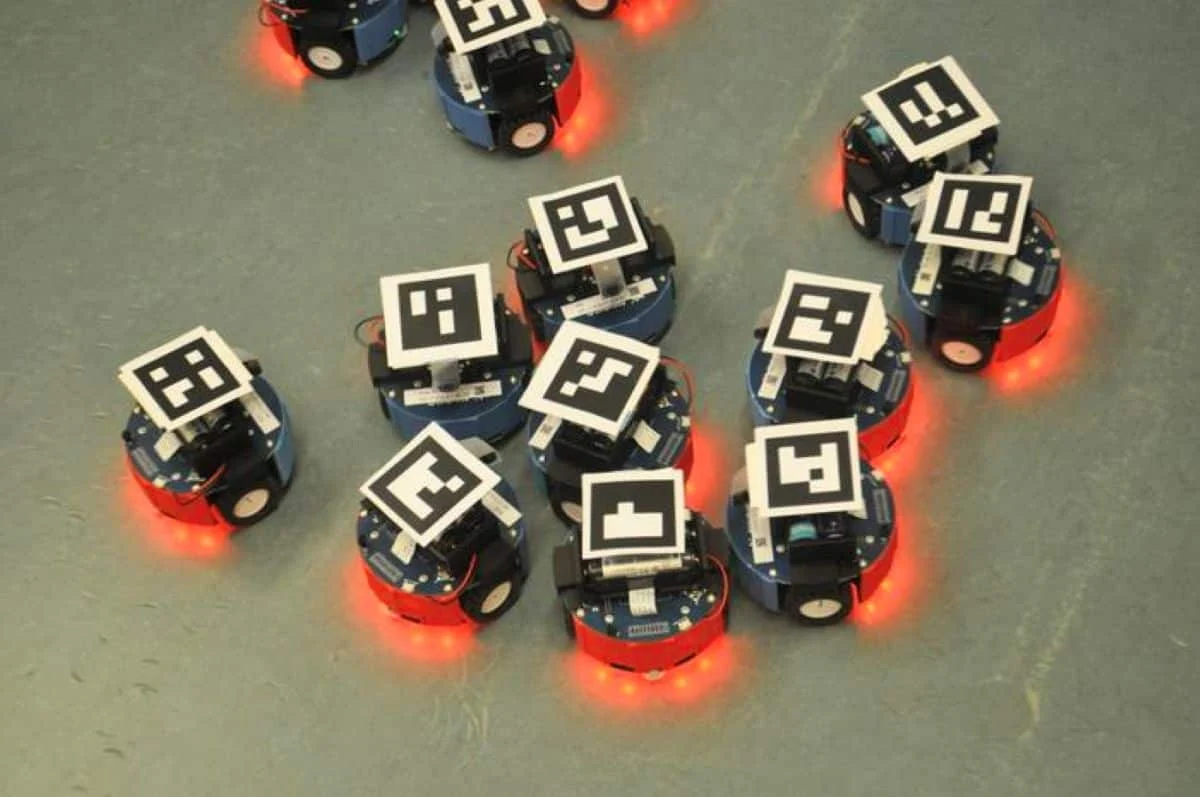

3. Physical Robotic Experiments: To bridge the gap between theoretical models and real-world applicability, Liu and her team collaborated with physicist Federico Toschi at Eindhoven University of Technology in the Netherlands. They set up physical swarms of small, wheeled robots in a laboratory arena monitored by an overhead camera. Each robot carried a QR code, allowing the camera system to track its precise position and to assign it new goal destinations.
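The camera-side bookkeeping might look something like the sketch below. It assumes, hypothetically, that an upstream tracker has already decoded each robot's QR code into an ID and an (x, y) position in arena coordinates. The function `reassign_goals`, the arrival tolerance, and the uniform choice of new goals are illustrative, not the experimental setup's actual software.

```python
import math
import random


def reassign_goals(positions, goals, arena, tolerance, rng=random):
    """Give a fresh random goal to every robot within `tolerance` of its goal.

    positions, goals: dicts mapping a robot ID (e.g. decoded from its QR code)
    to (x, y) coordinates. arena: (width, height) of the tracked area.
    Mutates `goals` in place and returns the IDs that arrived this frame.
    """
    width, height = arena
    arrived = []
    for robot_id, (x, y) in positions.items():
        gx, gy = goals[robot_id]
        if math.hypot(gx - x, gy - y) <= tolerance:
            arrived.append(robot_id)
            goals[robot_id] = (rng.uniform(0, width), rng.uniform(0, height))
    return arrived


if __name__ == "__main__":
    positions = {"r1": (1.0, 1.0), "r2": (5.0, 5.0)}
    goals = {"r1": (1.05, 1.0), "r2": (0.0, 0.0)}
    print(reassign_goals(positions, goals, arena=(10.0, 10.0), tolerance=0.1))
```

Counting the returned IDs frame by frame gives the experimental goal attainment rate directly, using the same definition as in the simulations.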

Despite the inherent differences between idealized simulations and the physical world – real robots move and turn more slowly, exhibit minor mechanical imperfections, and experience friction – the key emergent behaviors observed in the simulations were strikingly replicated. The physical experiments corroborated the theoretical and computational findings: a calibrated level of randomness in movement prevented permanent gridlock and enhanced the overall efficiency of the robotic swarm.

Beyond Central Control: The Power of Local Rules

One of the most profound insights from this study is the reaffirmation that highly coordinated, efficient task completion in crowded environments does not necessarily require a powerful central computer or ultra-intelligent, individually complex robots. Instead, a simple set of local navigational rules, specifically incorporating a controlled degree of randomness, may be all that is needed. This finding challenges the conventional wisdom that complex problems demand complex solutions, particularly in the realm of robotics and AI.

"Understanding how active matter, whether it is a swarm of ants, a herd of animals, or a group of robots, become functional and execute tasks in crowded environments using the principles of self-organization, is relevant to many questions in behavioral ecology," commented L. Mahadevan. "Our study suggests strategies that might well be much broader than the instantiation we have focused on." This perspective highlights the interdisciplinary nature of the research, drawing parallels between engineered robotic systems and natural biological collectives.

The researchers found that a simple reactive scheme, in which individual robots respond to local conditions with a degree of randomness, performs well up to moderate densities. Crucially, this decentralized approach is far more computationally efficient than a centralized planner that attempts to calculate every path for every agent. This has significant implications for the scalability and real-world deployability of future robotic systems, especially in scenarios where computational resources are limited or real-time adaptability is paramount.

Broader Implications and Future Applications

The implications of this research extend far beyond the realm of robotic swarms, touching upon various facets of science, engineering, and societal organization.

1. Robotics and Automation:

- Logistics and Warehousing: Optimizing the movement of automated guided vehicles (AGVs) in crowded warehouses to prevent bottlenecks and improve throughput. This could substantially improve supply chain efficiency.

- Disaster Response: Deploying swarms of small robots for search and rescue operations in collapsed buildings or hazardous environments, where precise central control might be impossible due to communication failures. The "wiggle" allows them to navigate debris and find paths.

- Modular Assembly and Manufacturing: Coordinating collaborative robots on factory floors to assemble complex products more efficiently, adapting to dynamic changes in the assembly line.

- Exploration: Enhancing the autonomy of robotic probes for planetary exploration or deep-sea mapping, allowing them to cover more ground and avoid getting stuck in challenging terrains.

2. Human Crowd Dynamics:

- Urban Planning and Architectural Design: Applying these principles to design public spaces such as subway stations, airport terminals, concert venues, and sports stadiums to naturally facilitate smoother pedestrian flow and prevent dangerous crushes. Architects could use predictive models to determine optimal "wiggle room" or path configurations.

- Emergency Evacuation: Developing more effective strategies for evacuating large crowds during emergencies, by understanding how slight variations in individual movement or guidance can prevent bottlenecks at exits.

- Traffic Management: While the study focused on discrete agents, the underlying principles could inform new approaches to vehicular traffic management, particularly in dense urban environments, by encouraging dynamic lane changes or slight deviations to prevent gridlock.

3. Behavioral Ecology: The study offers new theoretical frameworks for understanding the self-organization observed in natural systems, such as ant colonies, bird flocks, or schools of fish. These biological swarms exhibit remarkable collective intelligence through simple, local interactions, and the concept of "noise" might be a fundamental mechanism underlying their resilience and efficiency in crowded natural environments.

Liu said she has consistently been drawn to research on the safe design of highly trafficked spaces. The study hints at a future where the dynamics of crowds, whether composed of humans, robots, vehicles, or a mixture, could be mathematically predicted and finely tuned. By understanding the "math of crowd density and wiggle room," engineers and urban planners could design systems and environments that naturally guide individuals into the "Goldilocks zone" of movement, preventing delays, increasing efficiency, and enhancing safety.

Funding and Future Directions

The research received significant support from the National Science Foundation Graduate Research Fellowship Program under Grant No. DGE 2140743, alongside grants from the Simons Foundation and the Henri Seydoux Fund. This crucial financial backing underscores the recognized importance of addressing fundamental challenges in robotics and complex systems.

The study concludes by pointing to new directions for research on emergent traffic. By integrating ideas from physics and engineering with simulations, theory, and experiments, the work calls for further exploration of robust, decentralized navigation methods for crowded environments. Because a simple reactive scheme performs well up to moderate densities while being far more computationally efficient than a central planner, the results motivate AI and robotics that treat inherent variability as a feature rather than a bug, potentially leading to more adaptable, resilient, and efficient autonomous systems for our increasingly crowded world.

About this robotics and neurotech research news

Author: Anne Manning

Source: Harvard

Contact: Anne Manning – Harvard

Image: The image is credited to Lucy Liu / Harvard SEAS

Original Research: Closed access.

“Noise-enabled goal attainment in crowded collectives” by Lucy Liu, Justin Werfel, Federico Toschi, and L. Mahadevan. PNAS

DOI:10.1073/pnas.2519032123

Abstract

Noise-enabled goal attainment in crowded collectives

In crowded environments, individuals must navigate around other occupants to reach their destinations. Understanding and controlling traffic flows in these spaces is relevant for coordinating robot swarms and designing infrastructure for dense populations.

Here, we use simulations, theory, and experiments to study how adding stochasticity to agent motion can reduce traffic jams and help agents travel more quickly to prescribed goals. A computational approach reveals the collective behavior.

Above a critical noise level, large jams do not persist. From this observation, we analytically approximate the swarm’s goal attainment rate, which allows us to solve for the agent density and noise level that maximize the goals reached.

Robotic experiments corroborate the behaviors observed in our simulated and theoretical results. Finally, we compare simple, local navigation approaches with a sophisticated but computationally costly central planner.

A simple reactive scheme performs well up to moderate densities and is far more computationally efficient than a planner, motivating further research into robust, decentralized navigation methods for crowded environments.

By integrating ideas from physics and engineering using simulations, theory, and experiments, our work identifies new directions for emergent traffic research.