The traditional pillars of higher education—sustained focus, deep analysis, and the methodical consumption of long-form media—are facing a systemic crisis as students increasingly struggle to engage with material exceeding the length of a short-form video. Recent reports from faculty at prestigious institutions across the United States indicate a sharp decline in what experts call "cognitive patience," a development that many attribute to the pervasive influence of smartphone technology and the algorithmic delivery of information. This phenomenon, once considered a peripheral concern regarding leisure habits, has now moved into the center of academic discourse, prompting a reevaluation of how the human brain processes complex narratives in a digital age.

The Crisis in the Cinematic Classroom

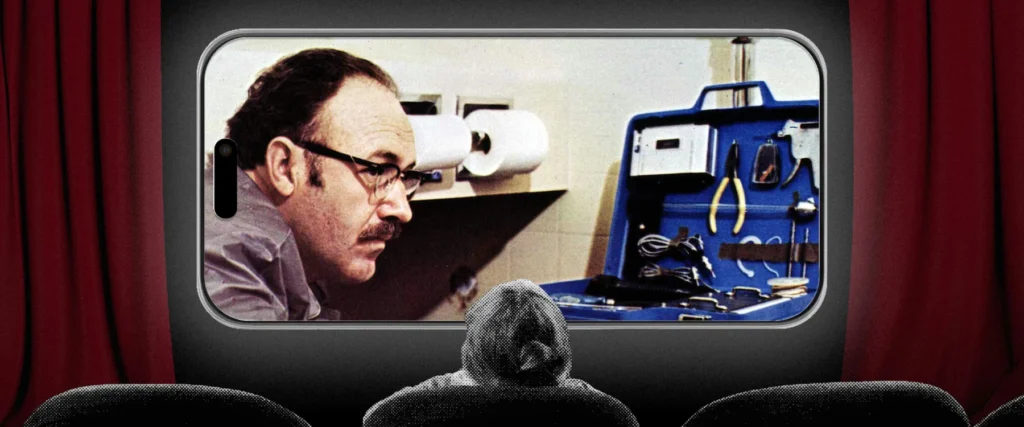

In a report recently published by The Atlantic, journalist Rose Horowitch detailed a growing consensus among film studies professors: a significant portion of the current student body can no longer sit through a feature-length motion picture. When students do not watch the assigned films, a core teaching method of the discipline stops working. Craig Erpelding, a professor of film at the University of Wisconsin at Madison, noted that while film assignments used to be considered the "best homework ever," contemporary students frequently fail to complete them.

This observation is not isolated. Horowitch interviewed 20 film-studies professors nationwide, all of whom reported a precipitous drop in student attention spans over the last decade. The decline reportedly reached a critical inflection point following the COVID-19 pandemic, which accelerated the integration of digital devices into every facet of daily life. At Tufts University, the founding director of Film and Media Studies reported that even strict bans on electronics during screenings are increasingly unenforceable, with students compulsively checking devices in the dark. Similarly, at the University of Southern California (USC), faculty have likened the behavior of students separated from their phones to "nicotine addicts going through withdrawal," characterized by physical fidgeting and visible distress.

A Chronology of Declining Attention

The current state of cognitive fragmentation is the result of a two-decade shift in how information is consumed. The timeline of this erosion can be traced through the evolution of consumer technology:

- 2007–2012: The Proliferation Phase. The introduction of the smartphone and the subsequent rise of the "app economy" began the process of fragmenting daily focus. During this period, the average person began transitioning from periodic internet usage on desktop computers to "always-on" connectivity.

- 2013–2019: The Algorithmic Shift. The transition of social media platforms from chronological feeds to algorithmic, engagement-based models prioritized short, high-stimulation content. Platforms like Vine and later TikTok began conditioning users for sub-60-second content cycles.

- 2020–2022: The Pandemic Acceleration. The move to remote learning and social isolation forced a near-total reliance on digital interfaces. Educational psychologists suggest that the lack of physical classroom accountability during this period allowed distracted habits to become deeply ingrained.

- 2023–Present: The Institutional Recognition. Higher education institutions are now grappling with the fallout, as the first "digitally native" cohorts who spent their formative teenage years in a high-stimulation environment enter advanced degree programs.

The Neurobiology of Cognitive Patience

Some researchers describe this loss of focus as a degradation of "cognitive patience." Coined by reading scholar Maryanne Wolf, the term refers to the ability to maintain focused, sustained attention and delay gratification while resisting the urge to multitask. Wolf, author of Reader, Come Home: The Reading Brain in a Digital World, argues that the human brain is plastic and adapts to the environment in which it operates.

The biological mechanism driving this change involves the brain’s short-term reward system. Smartphones activate neuronal bundles that anticipate high expected value from new notifications or infinite scrolling. These bundles effectively "vote" for distracting behavior by triggering a cascade of neurochemicals, such as dopamine, which the individual experiences as a powerful motivation to check their device. Over time, as the brain is repeatedly rewarded for "task-switching" and seeking novelty, the neural pathways required for sustained concentration begin to weaken from disuse. The result is a physiological discomfort with silence, stillness, and long-form narrative arcs.

Data and Supporting Evidence

While anecdotal evidence from professors is compelling, broader data is often cited in support of a shrinking collective attention span. A 2015 consumer-insights report from Microsoft Canada stated that the average human attention span had dropped from 12 seconds in the year 2000 to approximately eight seconds. The figure is often cited to highlight the impact of the digital age, though its sourcing has been questioned, and some psychologists argue the issue is more about "selective attention" than a literal reduction in capacity.

Furthermore, a 2023 report on digital habits indicated that the average Gen Z user spends upwards of seven hours a day on their smartphone, with a significant portion of that time dedicated to "micro-content." This constant stream of high-velocity information sets a baseline of stimulation against which traditional media, such as a 120-minute film or a 300-page novel, feels agonizingly slow.

Reclaiming the Brain: The Cinematic 5k

In response to this crisis, some experts are proposing a "training" model to help individuals regain their cognitive autonomy. The concept suggests using film, the very medium that students are currently struggling with, as a tool for neurological rehabilitation. Much like a sedentary individual might train for a 5k run, those with fragmented attention spans can treat watching a full-length film as a milestone of mental endurance.

To facilitate this "cinematic cognitive patience," several strategies have been suggested:

- Environmental Decoupling: Placing the smartphone in a separate room to eliminate the "proximal pull" of the device.

- Intentional Selection: Choosing films that require active engagement rather than "background" viewing, thereby forcing the brain to track complex subplots and character development.

- Incremental Exposure: Starting with shorter, high-intensity narratives and gradually moving toward slower-paced, "contemplative" cinema.

This approach acknowledges the irony of using one screen to combat the effects of another but posits that the structure of a film—with a beginning, middle, and end—serves as a necessary bridge back to deep literacy and sustained focus.

Broader Implications: From Classrooms to the Economy

The implications of this attention crisis extend far beyond the walls of film departments. If the future workforce lacks the cognitive patience to engage with complex material, the productivity and innovation of the white-collar economy may be at risk. This concern coincides with the rise of Generative Artificial Intelligence (AI), which some fear will exacerbate the problem by providing "summaries" and "shortcuts" that further remove the need for deep engagement.

However, some analysts caution against "vibe reporting"—a term used to describe media trends that rely on anecdotal anxiety rather than empirical data. For instance, recent reports titled "The Worst-Case Future for White-Collar Workers" suggest that AI will imminently replace entry-level roles because young workers can no longer perform the deep work required to compete with machines. While AI will undoubtedly disrupt the job market, particularly in software development, the magnitude of this shift remains unproven.

A recent reporting project involving over 300 computer programmers revealed that while AI tools like GitHub Copilot are changing how code is written, the human element of "architectural thinking"—which requires immense cognitive patience—remains irreplaceable. The danger, therefore, is not necessarily that AI will replace humans, but that humans will lose the cognitive capacity to manage and direct the AI effectively.

Conclusion and Future Outlook

The struggle of film students to sit through movies is a "canary in the coal mine" for a broader societal shift. As the digital environment continues to prioritize speed and brevity, the preservation of cognitive patience becomes a radical act of self-regulation. Educational institutions are now at a crossroads: they must decide whether to adapt their curricula to shorter attention spans or to double down on the "slow" methods of the past as a form of essential mental training.

The fight for depth in an age of distraction is not merely an academic exercise; it is a necessary effort to maintain the human capacity for complex thought, empathy, and long-term planning. Whether through the "patient joys of movies" or the rigorous demands of deep reading, reclaiming the ability to focus is becoming the defining challenge of the 21st century.